Moral Pluralism through the Lens of Optimization

| Created | |
|---|---|
| Tags | Ethics, Philosophy |

In this short piece I want to discuss one idea that helped me better understand Moral (Value) Pluralism. I stumbled upon this concept from ethics at the last meeting of my Student Philosophy Society, where we discussed Two Levels of Pluralism (PDF) by Susan Wolf.

Moral Pluralism in a nutshell (according to Wolf)

As some of you might know, there is a traditional divide in ethics between absolutism (e.g. Kantianism, utilitarianism) and relativism (and maybe more divides I don't even know of!).

Susan Wolf puts the distinction this way:

For the issue of objectivity of values is often posed in terms of such questions as, "Is there a true view of morality?" and "Of two conflicting views, is at least one of them wrong?" To these questions, the relativist answers "no"—there is no single right answer, no true moral view; the absolutist answers "yes"—there is always a right answer, given by the single correct moral system. The pluralist can be expected to answer "sometimes"—for even if some situations will set irreducibly different values into oppositions that cannot rationally be resolved, there is no reason to expect that all, or most, moral issues will be of this sort.

So for the pluralist, the answer to some moral questions is not relative but instead indeterminate, as Wolf phrases it in her paper.

My interpretation

While reading the paper I struggled with the definitions and analogies of pluralism that Wolf offered, and I wasn't the only one in my philosophy group. I understood the words she was saying but couldn't make sense of how pluralism really differs from the other two positions.

When I can't understand something, I tend to throw frameworks at it that I am familiar with and that are powerful, such as machine learning/optimization, evolution, or physics.

So that's what I did.

Function optimization

I thought of absolutism, relativism and pluralism as three ways to approach the optimization of a function (i.e. finding its maximum value or values). I am sure this analogy is not perfectly thought through and might frustrate "real" philosophers. But hopefully it is helpful to others out there.

The function being optimized could be seen as encoding the goals of a moral theory (e.g. happiness, freedom, peace...), or more formally as a function that maps an action to its moral value.

Now the absolutist would say that there is one correct function to optimize and exactly one set of inputs that yields its maximal value. This set of inputs could be interpreted as the absolutist's moral theory, e.g. that to attain the maximum moral value one has to follow Kant's Categorical Imperative.

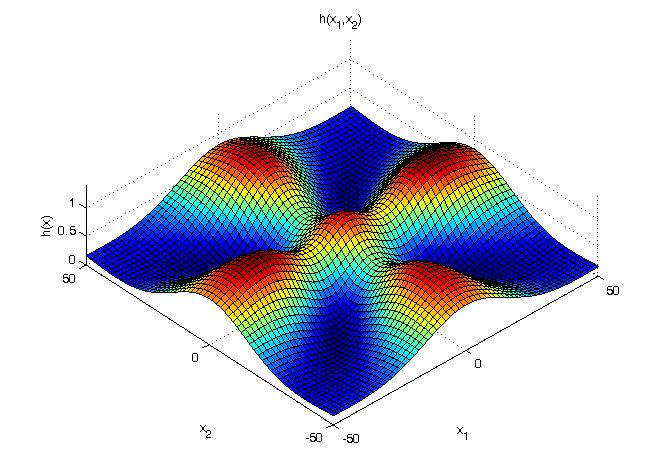
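To make the absolutist picture a bit more concrete, here is a minimal Python sketch. Everything in it is invented for illustration: actions are just numbers, and `moral_value` is a hypothetical scoring function that I chose because it has exactly one peak. It is a toy for the analogy, not a claim about how any real moral theory works.

```python
# Toy sketch of the absolutist view: one function, one best input.
# `moral_value` and the list of actions are hypothetical, made up for this post.

def moral_value(action: float) -> float:
    """Invented scoring function with a single peak at action == 2.0."""
    return -(action - 2.0) ** 2

# Candidate actions: 0.0, 0.5, 1.0, ..., 4.0
actions = [i * 0.5 for i in range(9)]

# The absolutist expects exactly one action to come out on top.
best_action = max(actions, key=moral_value)
print(best_action)  # 2.0
```

With a function shaped like this, asking "what is the right thing to do?" always has one answer.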

But now the pluralist comes along and says that in some situations there are indeed several inputs that give us (approximately) the same moral value. So in those situations there is no single correct answer about the ideal action!

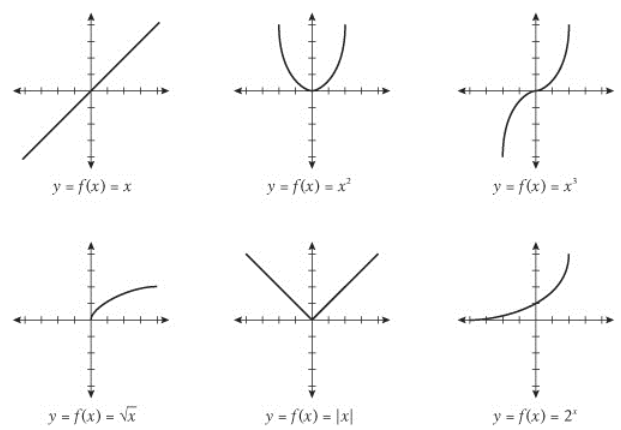
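The pluralist case can be sketched the same way. Again, this is only a toy under my own assumptions: the hypothetical `moral_value` function below was picked because it has two equally high peaks, and `tolerance` is an arbitrary threshold standing in for "approximately the same moral value".

```python
# Toy sketch of the pluralist view: one function, but several best inputs.
# `moral_value`, the actions and `tolerance` are hypothetical choices.

def moral_value(action: float) -> float:
    """Invented scoring function with two equal peaks, at -1.0 and +1.0."""
    return -(action ** 2 - 1.0) ** 2

# Candidate actions: -2.0, -1.5, ..., 2.0
actions = [i * 0.5 - 2.0 for i in range(9)]

tolerance = 1e-9  # how close two values must be to count as "the same"
best_value = max(moral_value(a) for a in actions)

# Collect every action whose value is within tolerance of the best one.
optimal_actions = [a for a in actions if best_value - moral_value(a) <= tolerance]
print(optimal_actions)  # [-1.0, 1.0]
```

Note that most actions are still clearly worse than the two winners. The function only fails to decide between the actions that tie at the top, which matches the pluralist's "sometimes".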

And finally the relativist points out that the choice of function is already arbitrary. Why not optimize another function?
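In the same toy setting, the relativist's objection might look like this. The two scoring functions `value_a` and `value_b` are hypothetical stand-ins for two different moral outlooks. Each has a perfectly clear optimum, but nothing in the code says which function is the right one to use.

```python
# Toy sketch of the relativist view: the choice of function is up for grabs.
# `value_a` and `value_b` are invented stand-ins for two moral outlooks.

def value_a(action: float) -> float:
    """Invented scoring function that peaks at action == 1.0."""
    return -(action - 1.0) ** 2

def value_b(action: float) -> float:
    """Invented scoring function that peaks at action == -1.0."""
    return -(action + 1.0) ** 2

# Candidate actions: -2.0, -1.5, ..., 2.0
actions = [i * 0.5 - 2.0 for i in range(9)]

# Each function picks its own winner; there is no function that ranks the functions.
best_by_function = {f.__name__: max(actions, key=f) for f in (value_a, value_b)}
print(best_by_function)  # {'value_a': 1.0, 'value_b': -1.0}
```

This differs from the pluralist sketch in an important way: there the disagreement came from ties within one function, here it comes from swapping the function itself.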

So that was my first article on this website. I hope you enjoyed it!